LocateMultipleObjects_NCC

| Header: | AVL.h |
|---|---|
| Namespace: | avl |
| Module: | MatchingBasic |

Finds all occurrences of a predefined template on an image by analysing the normalized correlation between pixel values.

Applications: Detection of objects with blurred or unclear edges. Often one of the first filters in a program.
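As an illustration of the kind of score this filter thresholds, the following is a minimal sketch of the textbook zero-mean normalized correlation between two equally sized patches. It is not taken from the library, and the exact formula used internally is an assumption; the function name `NormalizedCorrelation` is hypothetical. The result lies between -1.0 and 1.0, which matches the documented range of inMinScore.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Textbook zero-mean normalized correlation of two equally sized patches.
// NOTE: illustrative only; the library's internal formula is an assumption.
// Returns a value in [-1, 1], or 0 when either patch has no contrast.
double NormalizedCorrelation(const std::vector<double>& a,
                             const std::vector<double>& b)
{
    assert(a.size() == b.size() && !a.empty());

    const double n = static_cast<double>(a.size());
    double meanA = 0.0, meanB = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i)
    {
        meanA += a[i];
        meanB += b[i];
    }
    meanA /= n;
    meanB /= n;

    // Accumulate covariance and both variances around the patch means.
    double cov = 0.0, varA = 0.0, varB = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i)
    {
        const double da = a[i] - meanA;
        const double db = b[i] - meanB;
        cov += da * db;
        varA += da * da;
        varB += db * db;
    }

    const double denom = std::sqrt(varA * varB);
    return denom > 0.0 ? cov / denom : 0.0;
}
```

Because the means are subtracted and the result is divided by both standard deviations, a patch that differs from the template only by a positive gain and an offset still scores close to 1.0.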

Syntax

void avl::LocateMultipleObjects_NCC ( const avl::Image& inImage, atl::Optional<const avl::ShapeRegion&> inSearchRegion, atl::Optional<const avl::CoordinateSystem2D&> inSearchRegionAlignment, const avl::GrayModel& inGrayModel, int inMinPyramidLevel, atl::Optional<int> inMaxPyramidLevel, bool inIgnoreBoundaryObjects, float inMinScore, float inMinDistance, atl::Optional<float> inMaxBrightnessRatio, atl::Optional<float> inMaxContrastRatio, atl::Array<avl::Object2D>& outObjects, atl::Optional<int&> outPyramidHeight = atl::NIL, atl::Optional<avl::ShapeRegion&> outAlignedSearchRegion = atl::NIL, atl::Array<avl::Image>& diagImagePyramid = atl::Dummy<atl::Array<avl::Image>>(), atl::Array<avl::Image>& diagMatchPyramid = atl::Dummy<atl::Array<avl::Image>>(), atl::Array<atl::Array<float>>& diagScores = atl::Dummy<atl::Array<atl::Array<float>>>() )

Parameters

| Name | Type | Range | Default | Description |
|---|---|---|---|---|
| inImage | const Image& | | | Image on which model occurrences will be searched |
| inSearchRegion | Optional<const ShapeRegion&> | | NIL | Range of possible object centers |
| inSearchRegionAlignment | Optional<const CoordinateSystem2D&> | | NIL | Adjusts the region of interest to the position of the inspected object |
| inGrayModel | const GrayModel& | | | Model of objects to be searched |
| inMinPyramidLevel | int | 0 - 12 | 0 | Defines the lowest pyramid level at which object position is still refined |
| inMaxPyramidLevel | Optional<int> | 0 - 12 | 3 | Defines the total number of reduced resolution levels that can be used to speed up computations |
| inIgnoreBoundaryObjects | bool | | False | Flag indicating whether objects crossing image boundary should be ignored or not |
| inMinScore | float | -1.0 - 1.0 | 0.7f | Minimum score of object candidates accepted at each pyramid level |
| inMinDistance | float | 0.0 - | 10.0f | Minimum distance between two found objects |
| inMaxBrightnessRatio | Optional<float> | 1.0 - | NIL | Defines the maximal deviation of the mean brightness of the model object and the object present in the image |
| inMaxContrastRatio | Optional<float> | 1.0 - | NIL | Defines the maximal deviation of the brightness standard deviation of the model object and the object present in the image |
| outObjects | Array<Object2D>& | | | Found objects |
| outPyramidHeight | Optional<int&> | | NIL | Highest pyramid level used to speed up computations |
| outAlignedSearchRegion | Optional<ShapeRegion&> | | NIL | Transformed input shape region |
| diagImagePyramid | Array<Image>& | | | Pyramid of iteratively downsampled input image |
| diagMatchPyramid | Array<Image>& | | | Candidate object locations found at each pyramid level |
| diagScores | Array<Array<float>>& | | | Scores of the found objects at each pyramid level |

Optional Outputs

The computation of the following outputs can be switched off by passing atl::NIL to these parameters: outPyramidHeight, outAlignedSearchRegion.

Read more about Optional Outputs.

Description

The operation matches the object model, inGrayModel, against the input image, inImage. The inSearchRegion parameter restricts the search area so that object centers can be found only within this region. The inMinScore parameter determines the minimum score of a valid object occurrence. The inMinDistance parameter determines the minimum distance between any two valid occurrences (if two occurrences lie closer than inMinDistance to each other, only the one with the greater score is considered valid).
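The interplay of the score threshold and the minimum distance can be sketched as a greedy filter over scored candidates. This is a minimal standalone illustration, not the library's implementation: the `Candidate` struct and the `FilterCandidates` function are hypothetical names, and the greedy strategy (best score first) is an assumption that merely reproduces the rule stated above.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

// Hypothetical candidate type: position of the object center and its score.
struct Candidate
{
    float x;
    float y;
    float score;
};

// Keeps candidates with score >= minScore and, among candidates lying
// closer than minDistance to each other, only the one with the greater score.
std::vector<Candidate> FilterCandidates(std::vector<Candidate> candidates,
                                        float minScore, float minDistance)
{
    assert(minDistance >= 0.0f);

    // Reject everything below the score threshold.
    candidates.erase(
        std::remove_if(candidates.begin(), candidates.end(),
                       [minScore](const Candidate& c) { return c.score < minScore; }),
        candidates.end());

    // Visit the best candidates first so they win distance conflicts.
    std::sort(candidates.begin(), candidates.end(),
              [](const Candidate& a, const Candidate& b) { return a.score > b.score; });

    std::vector<Candidate> kept;
    for (const Candidate& c : candidates)
    {
        bool tooClose = false;
        for (const Candidate& k : kept)
        {
            if (std::hypot(c.x - k.x, c.y - k.y) < minDistance)
            {
                tooClose = true;
                break;
            }
        }
        if (!tooClose)
            kept.push_back(c);
    }
    return kept;
}
```

With the default values from the parameter table (a minimum score of 0.7 and a minimum distance of 10), a candidate scoring 0.8 that lies 5 pixels from a candidate scoring 0.9 would be dropped, and any candidate scoring below 0.7 would be rejected regardless of its position.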

The computation time of the filter depends on the size of the model and on the sizes of inImage and inSearchRegion, but also on the value of inMinScore. This parameter is a score threshold: based on its value, a partial computation can be sufficient to reject some locations as valid object instances. Moreover, a pyramid of images is used, so only the highest pyramid level is searched exhaustively, and potential candidates are later validated at lower levels. The inMinPyramidLevel parameter determines the lowest pyramid level used to validate such candidates. Setting this parameter to a value greater than 0 may speed up the computation significantly, especially for higher-resolution images. However, the accuracy of the found object occurrences can be reduced.

A larger inMinScore generates fewer potential candidates on the highest level to verify on lower levels. It should be noted that some valid occurrences with a score above this threshold can be missed: the score on higher levels can be slightly lower than on lower levels, so an occurrence that would be deemed valid on the lowest level can be incorrectly rejected on some higher level. The diagMatchPyramid output represents all potential candidates recognized on each pyramid level and can be helpful during the difficult process of setting the parameters properly.
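The image pyramid itself can be pictured with the following minimal sketch. It assumes that each level halves the resolution by averaging 2x2 pixel blocks; the actual downsampling method used by the filter is not stated here, and the `GrayImage`, `Downsample2x` and `BuildPyramid` names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical minimal grayscale image: row-major float pixels.
struct GrayImage
{
    int width;
    int height;
    std::vector<float> pixels;
};

// Halves the resolution by averaging each 2x2 block (an assumed scheme).
GrayImage Downsample2x(const GrayImage& src)
{
    assert(src.width >= 2 && src.height >= 2);

    GrayImage dst{src.width / 2, src.height / 2, {}};
    dst.pixels.resize(static_cast<std::size_t>(dst.width) * dst.height);

    for (int y = 0; y < dst.height; ++y)
    {
        for (int x = 0; x < dst.width; ++x)
        {
            const std::size_t top = static_cast<std::size_t>(2 * y) * src.width + 2 * x;
            const std::size_t bottom = top + src.width;
            const float sum = src.pixels[top] + src.pixels[top + 1] +
                              src.pixels[bottom] + src.pixels[bottom + 1];
            dst.pixels[static_cast<std::size_t>(y) * dst.width + x] = sum / 4.0f;
        }
    }
    return dst;
}

// Builds levels 0..maxLevel, stopping early once the image gets too small.
std::vector<GrayImage> BuildPyramid(const GrayImage& level0, int maxLevel)
{
    std::vector<GrayImage> pyramid{level0};
    for (int level = 1; level <= maxLevel; ++level)
    {
        const GrayImage& previous = pyramid.back();
        if (previous.width < 2 || previous.height < 2)
            break;
        pyramid.push_back(Downsample2x(previous));
    }
    return pyramid;
}
```

Under this assumption, each additional level reduces the number of pixels roughly fourfold, which is why an exhaustive search only on the highest level is cheap, and why a candidate found at level k corresponds to a position scaled by 2 to the power of k at level 0.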

To be able to locate objects which are partially outside the image, the filter assumes that there are only black pixels beyond the image border.

The outObjects.Point array contains the model reference points of the matched object occurrences. The corresponding outObjects.Angle array contains the rotation angles of the objects. The corresponding outObjects.Match array provides both the position and the angle of each match, combined into a value of Rectangle2D type. Each element of the outObjects.Alignment array contains information about the transform required to map geometrical objects defined in the context of the template image onto the object at the corresponding outObjects.Match position. This array can later be used, e.g., by filters from the 1D Edge Detection or Shape Fitting categories.
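To make the role of the alignment more concrete, the sketch below maps a point defined relative to the template into image coordinates using an origin, a rotation angle and a scale. The `Point2D`, `Alignment2D` and `ApplyAlignment` names are hypothetical stand-ins, not the library's types, and the angle unit (degrees) and rotation convention are assumptions.

```cpp
#include <cassert>
#include <cmath>

// Hypothetical stand-ins for illustration; not the library's own types.
struct Point2D
{
    double x;
    double y;
};

struct Alignment2D
{
    Point2D origin;      // where the template origin lands in the image
    double angleDegrees; // rotation of the found object (assumed degrees)
    double scale;        // size of the found object relative to the template
};

// Maps a point given in template coordinates into image coordinates:
// scale and rotate around the template origin, then translate.
Point2D ApplyAlignment(const Alignment2D& alignment, const Point2D& p)
{
    assert(alignment.scale > 0.0);

    const double kPi = 3.14159265358979323846;
    const double radians = alignment.angleDegrees * kPi / 180.0;
    const double c = std::cos(radians);
    const double s = std::sin(radians);

    return Point2D{
        alignment.origin.x + alignment.scale * (c * p.x - s * p.y),
        alignment.origin.y + alignment.scale * (s * p.x + c * p.y)};
}
```

A measurement location defined once on the template, for example the start point of a scan segment, could be transformed this way for every found occurrence, so that the same inspection is repeated at each object's actual position and orientation.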

Hints

- If an object is not detected, first try decreasing inMaxPyramidLevel, then try decreasing inMinScore. However, if you need to lower inMinScore below 0.5, then probably something else is wrong.

- If all the expected objects are correctly detected, try achieving higher performance by increasing inMaxPyramidLevel and inMinScore.

- Please note that, due to the pyramid strategy used for speeding up computations, some objects whose score would finally be above inMinScore may not be found. This may be surprising, but it is by design. The reason is that the same minimum value is applied at every pyramid level, and many objects exhibit a lower score at higher pyramid levels. They get discarded before they can be evaluated at the lowest pyramid level. Decrease inMinScore experimentally until all objects are found.

- If precision of matching is not very important, you can also gain some performance by increasing inMinPyramidLevel.

- If the performance is still not satisfactory, go back to model definition and try reducing the range of rotations and scaling as well as the precision-related parameters: inAnglePrecision and inScalePrecision.

- Define inSearchRegion to limit possible object locations and speed up computations. Please note that this is the region to which the outObjects.Point results belong (and NOT the region within which the entire object has to be contained).

- Smoothing of the input image can significantly improve performance of this tool.

Examples

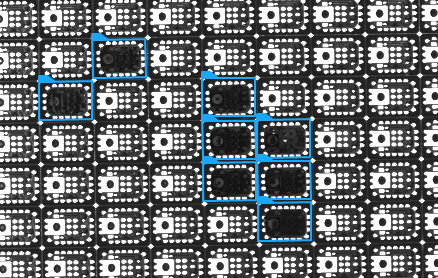

Locating multiple objects with the gray-based method (inMaxPyramidLevel = 2). Please note that the edge-based method might not work properly here, because what we are looking for (a missing chip) is a sub-object of what we are not looking for (a correct chip).

Remarks

Read more about Local Coordinate Systems in Machine Vision Guide: Local Coordinate Systems.

Additional information about Template Matching can be found in Machine Vision Guide: Template Matching.

Hardware Acceleration

This operation is optimized for SSE2 technology for pixels of type: UINT8.

This operation is optimized for AVX2 technology for pixels of type: UINT8.

This operation is optimized for NEON technology for pixels of type: UINT8.

This operation supports automatic parallelization for multicore and multiprocessor systems.

See Also

- LocateSingleObject_NCC – Finds a single occurrence of a predefined template on an image by analysing the normalized correlation between pixel values.

- CreateGrayModel – Creates a model for NCC or SAD template matching.

- LocateMultipleObjects_SAD – Finds multiple occurrences of a predefined template on an image by analysing the Square Average Difference between pixel values.